The Challenge

What MetekuAI Was Facing

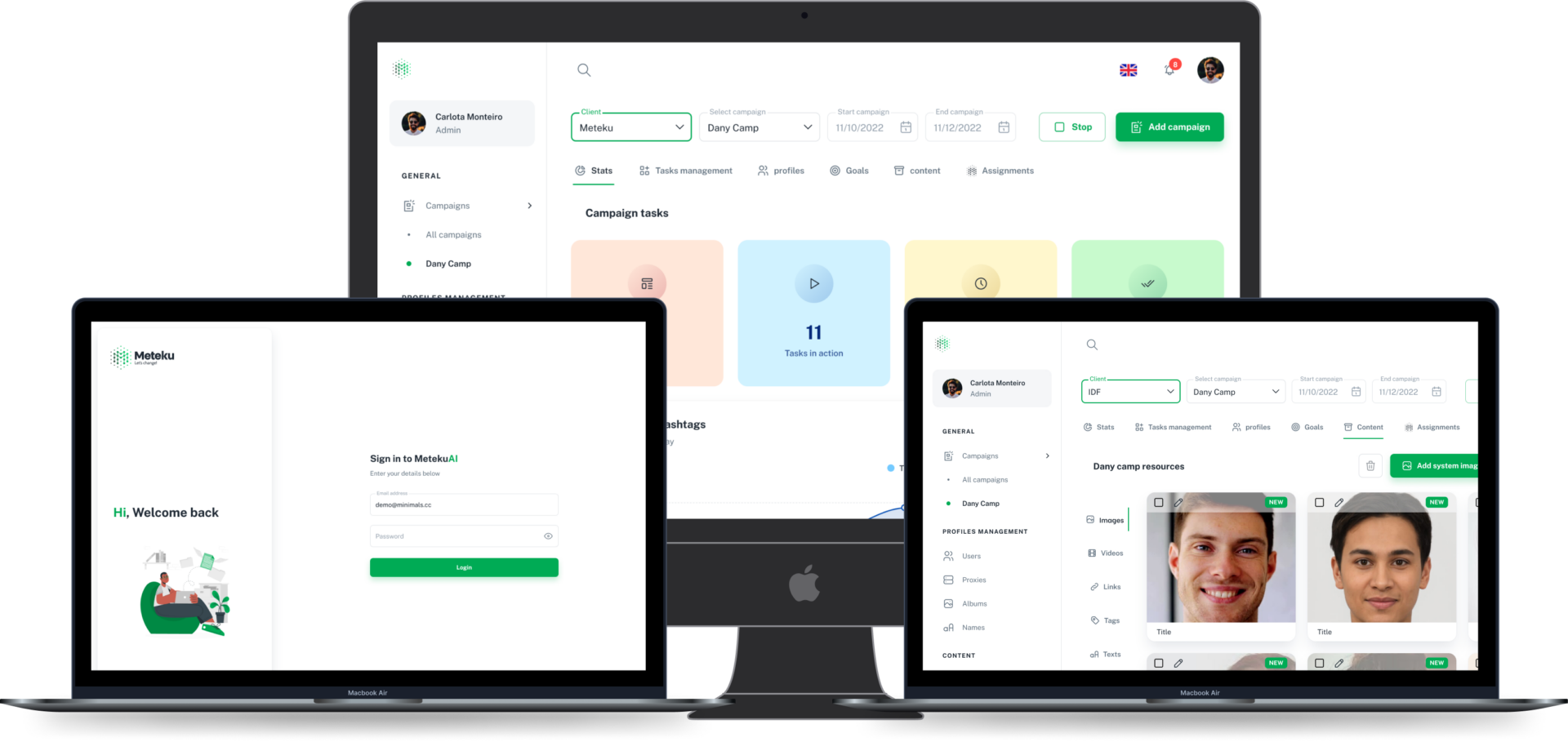

MetekuAI's orchestration platform allowed human operators to intervene in AI-driven workflows, but operators were overriding the AI at three times the expected rate, which introduced inefficiency rather than meaningful oversight. Mental model interviews with 20 operators revealed that the interface presented AI confidence scores in a format that felt arbitrary, leading operators to distrust even correct AI decisions.

The Solution

What We Built

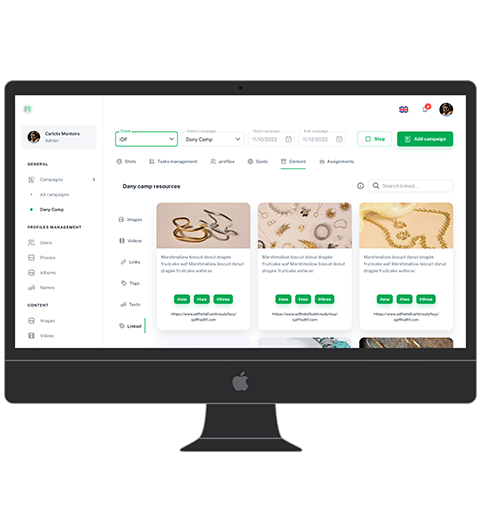

We ran a series of explainability research workshops, testing different confidence visualisation formats with operators across skill levels. The revised interface surfaced the AI's reasoning in plain language alongside contextual evidence. A new information architecture (IA) clearly separated monitoring, intervention, and review modes, giving operators a clear mental map of when human action was expected and when it was optional.

Results